Workers AI

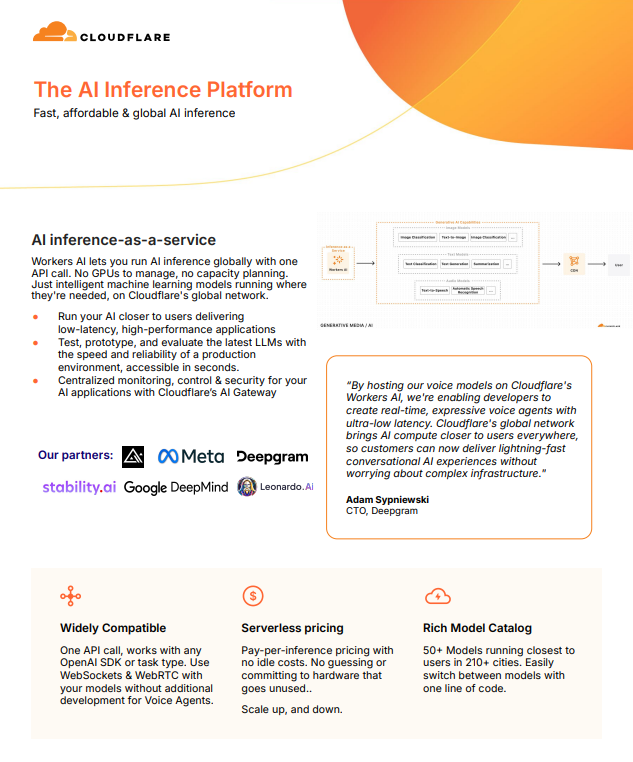

Cloudflare’s Workers AI is a fully managed, global inference platform that lets developers run machine learning models with a single API call, without provisioning GPUs or managing infrastructure.

Deliver ultra-low-latency AI experiences by running models closer to users, on a global network that spans hundreds of cities and provides millisecond-level response times for most of the world.

With flexible pay-per-inference pricing, a catalogue of 50+ models, and seamless integration with existing tools, Workers AI simplifies scaling, experimentation, and production deployment.

Download the full whitepaper to discover how to build faster, smarter AI applications.